Understanding the Brain’s Algorithms Through the Lens of its Physical Constraints

Everything the human brain is capable of is the product of a complex symphony of interactions between many distinct signaling events and a myriad of individual computations. The brain’s ability to learn, connect abstract concepts, adapt, and imagine is the result of this vast combinatorial computational space. Even elusive properties such as self-awareness and consciousness presumably owe themselves to it.

This computational space is the result of millions of years of evolution, manifested by the physical and chemical substrate that makes up the brain: its ‘wetware’. Physically, the brain is a geometric spatial network consisting of about 86 billion neurons and 85 billion non-neuronal cells. There is tremendous heterogeneity within these numbers, with many classes and sub-classes of both neurons and non-neuronal cells exhibiting different physiological dynamics and morphological (structural) heterogeneity that contribute to the dynamics. The brain’s complexity, and the functions that emerge from it, arise from a seemingly uncountable number of signaling events that occur at different spatial and temporal scales and that interact and integrate with each other.

To get a sense of the sheer size of the numbers that make up this computational space, a first-order approximation of the total number of synapses is roughly ten quadrillion. Individual neurons have been estimated to have on the order of tens of thousands to hundreds of thousands of synapses each. The exact number varies significantly over the course of a person’s lifetime and with the brain region being considered. For example, pyramidal neurons in the cortex have been estimated to have about 32,000 synapses; interneurons, which are the computational workhorses of the brain, between 2,200 and 16,000; neurons in the rat visual cortex about 11,000; and neurons in other parts of the human cortex roughly 7,200.

Yet, despite a size that is intuitively difficult to grasp, this computational space is finite. It is limited by the physical constraints imposed on the brain.

The thesis we considered in this preprint is that taking advantage of these constraints can guide the analysis and interpretation of experimental data and the construction of mathematical models that aim to make sense of how the brain works and how cognitive functions emerge. Specifically, we proposed that there exists a fundamental structure-function constraint that any theoretical or computational model claiming relevance to neuroscience must be able to account for, even if the intent and original construction of the model do not take this constraint into consideration.

Testing candidate theoretical and computational models has to rely on the use of mathematics as a unifying language and framework. This lets us build an engineering systems view of the brain that connects low-level observations to functional outcomes. It also lets us generalize beyond neuroscience. For example, understanding the algorithms of the brain separately from the wetware that implements them will allow these algorithms to be integrated into new machine learning and artificial intelligence architectures. As such, mathematics needs to play a central role in neuroscience, not a supporting one. To us, this intersection between mathematics, engineering, and experiments reflects the future of neuroscience as a field.

There must also be plausible, experimentally testable predictions that result from candidate models. A particular sensitivity is that the predictions of mathematical models may exceed the expressive capabilities of existing experimental models. This motivates the push for continuously advancing experimental models and the technical methods required to interrogate them. Abstraction (for generalization) and validation, from experimental models to humans and from computational and theoretical models to machines, both support a systems approach that builds on constraints while modeling behavior.

We briefly explore these ideas in the context of a specific set of fundamental structure-function constraints.

A fundamental constraint imposed by neural structure and dynamics

Any theoretical or computational model of the brain, regardless of scale, must be able to account for the constraints imposed by the physical structures that bound neural processes. This is a reasonable requirement if the claims being made by a model are that the brain actually works in the way the model is describing it. The conditions that determine these physical constraints are informed by neurobiological data provided by experiments.

One very basic constraint is the result of the interplay between anatomical structure and signaling dynamics. The integration and transmission of information in the brain are dependent on the interactions between structure and dynamics across a number of scales of organization. This fundamental structure-function constraint is imposed by the geometry (connectivity and path lengths) of the structural networks that make up the brain at different scales, and the resultant latencies involved with the flow and transfer of information within and between functional scales. It is a constraint produced by the way the brain is wired, and how its constituent parts necessarily interact, e.g. neurons at one scale and brain regions at another.

The networks that make up the brain are physical constructions over which information must travel. The signals that encode this information are subject to processing times and finite signaling speeds (conduction velocities), and they must travel finite distances to exert their effects; in other words, to transfer the information they are carrying to the next stage in the system. Nothing is infinitely fast.

Furthermore, the latencies created by the interplay between structural geometries and signaling speeds are generally at a temporal scale similar to the functional processes being considered. So unlike turning on a light switch, where the effect to us seems instantaneous and we can ignore the timescales over which electricity travels, the effect of signaling latencies imposed by the biophysics and geometry of the brain’s networks cannot be ignored. They determine our understanding of how structure determines function in the brain, and how function modulates structure, for example, in learning or plasticity.

While a model or algorithm does not need to take this fundamental structure-function constraint into account explicitly, it must at least implicitly accommodate the dependencies the constraint creates in order to be relevant to neurobiology. In other words, additional ideas and equations should be able to bridge the model’s original mathematical construction to an explicit dependency on the structure-function constraint. For example, a model that relies on the instantaneous coupling of spatially separable variables can never be a model of a process or algorithm used by the brain, because there is simply no biological or physical mechanism that can account for such a mathematical condition. The requirement that any realistic candidate model of a brain algorithm be able to accommodate the structure-function constraint is simply the result of the physical makeup and biophysics of biological neural networks.

Competitive-refractory dynamics: An example of a model that takes advantage of structural-functional constraints

We recently described, in a paper published in Neural Computation, the construction and theoretical analysis of a framework we called the competitive-refractory dynamics model. It is derived from the canonical neurophysiological principles of spatial and temporal summation. The dependency of this model on the fundamental structure-function constraint is explicit in its construction and equations. In fact, the entire theory takes advantage of the constraints that the geometry of a network imposes on the latencies of signal transfer on the network. Yet it is not specific to neurobiological networks. It is a mathematical abstraction applicable to any physically constructible network.

The framework models the competing interactions of signals incident on a target downstream node (e.g. a neuron) along directed edges coming from the upstream nodes that connect into it. The model takes into account how temporal latencies, which arise from the geometry (physical structure) of the network, produce offsets in the timing of the summation of incoming discrete events, and how this results in the activation of the target node. It captures how differently timed signals compete to ‘activate’ the nodes they connect into. This could be a network of neurons or a network of brain regions, for example. At the core of the model is the notion of a refractory period or refractory state for each node. This reflects a period of internal processing, or an inability to react to other inputs, at the individual node level. The model does not assume anything about the internal dynamics that produce this refractory state, which could include an internal processing time during which the node is making a decision about how to react.
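The competition described above can be illustrated with a deliberately simplified sketch. It is not the published model: it ignores summation entirely and assumes that the "winner" is simply the first signal to arrive once the target node has left its refractory state. The class `IncomingSignal`, the function `winning_signal`, and all numerical values are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class IncomingSignal:
    """A discrete event travelling along one directed edge (hypothetical type)."""
    source: str         # label of the upstream node
    emitted_at: float   # emission time, in ms
    path_length: float  # edge path length, arbitrary length units
    speed: float        # signaling speed, length units per ms

    @property
    def arrival(self) -> float:
        # Arrival time = emission time + latency (path length / speed).
        return self.emitted_at + self.path_length / self.speed


def winning_signal(signals: Iterable[IncomingSignal],
                   refractory_until: float) -> Optional[IncomingSignal]:
    """Return the earliest signal arriving at or after the end of the target's
    refractory state, or None if every signal arrives too early.
    Simplifying assumption: no summation, first eligible arrival wins."""
    eligible = [s for s in signals if s.arrival >= refractory_until]
    return min(eligible, key=lambda s: s.arrival, default=None)


if __name__ == "__main__":
    signals = [
        IncomingSignal("a", emitted_at=0.0, path_length=10.0, speed=2.0),   # arrives at 5.0 ms
        IncomingSignal("b", emitted_at=1.0, path_length=30.0, speed=10.0),  # arrives at 4.0 ms
        IncomingSignal("c", emitted_at=0.0, path_length=4.0, speed=2.0),    # arrives at 2.0 ms
    ]
    # The target is assumed to be refractory until t = 3.0 ms, so "c" is ignored.
    winner = winning_signal(signals, refractory_until=3.0)
    print(winner.source if winner else "no activation")
```

In this toy setting the signal from "c" arrives first but is lost because the node is still refractory, so the later signal from "b" is the one that activates it. The point is only that geometry and speed, not emission order alone, decide which input wins.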

In a geometric network, temporal latencies are due to the relationship between signaling speeds (conduction velocities) and the geometry of the edges on the network (i.e. edge path lengths). A key result from considerations of the interplay between network geometry and dynamics is the refraction ratio: the ratio between the refractory period and effective latencies. This ratio reflects a necessary balance between how fast information (signals) is propagating through the network and how long each individual node needs to internally process incoming information. It is an expression that describes a local rule (at the individual node scale) that governs the global dynamics of the network. We can then use sets of refraction ratios to compute the causal order in which signaling events trigger other signaling events in a network. This allows us to compute (predict) and analyze the overall dynamics of the network.
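As a rough numerical illustration of the definition above, the sketch below computes a latency as path length divided by signaling speed, and a refraction ratio as refractory period divided by that latency. The function names and the example values (a 5 ms refractory period and a 100 pixel edge) are assumptions made for illustration; the published model's "effective" latency may include terms this sketch omits.

```python
def latency(path_length: float, speed: float) -> float:
    """Time for a signal to traverse an edge: path length / signaling speed."""
    if speed <= 0:
        raise ValueError("speed must be positive")
    return path_length / speed


def refraction_ratio(refractory_period: float,
                     path_length: float,
                     speed: float) -> float:
    """Ratio of a node's refractory period to the latency on an incoming edge."""
    return refractory_period / latency(path_length, speed)


if __name__ == "__main__":
    refractory_ms = 5.0     # assumed refractory period, in ms
    edge_pixels = 100.0     # assumed edge path length, in pixels
    for speed in (2.0, 20.0, 200.0):  # pixels per ms
        ratio = refraction_ratio(refractory_ms, edge_pixels, speed)
        print(f"speed={speed:>5} px/ms  latency={latency(edge_pixels, speed):>5} ms  ratio={ratio}")
```

With a fixed geometry, raising the signaling speed shortens the latency and therefore raises the ratio. A ratio well above 1 means the latency is short relative to the refractory period, and a ratio well below 1 means the opposite.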

The last important consideration for our purposes here is that a theoretical analysis of the model resulted in the derivation of a set of (upper and lower) bounds that constrain a mathematical definition of efficient signaling for a structural geometric network running the dynamic model. This produced a proof of what we called the optimized refraction ratio theorem, which turned out to be relevant to the analyses of experimental data.

Competitive-refractory dynamics in real-world applications

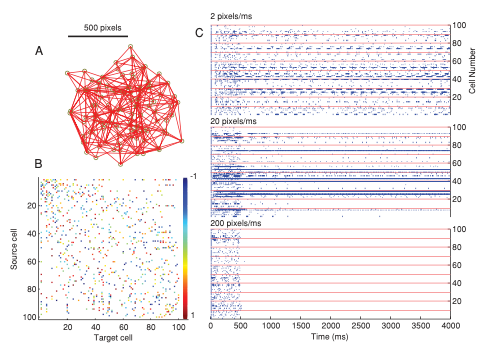

The effects of the structure-function constraint can be seen in experimental results through analyses that make use of the competitive-refractory dynamics model. We have shown in numerical simulations that network dynamics can completely break down in geometric networks (such as biological neural networks) if there is a mismatch in the refraction ratio (see Fig. 3 in this paper, also published in Neural Computation, reproduced above).

In the numerical simulation experiments shown in the figure above, we stimulated a geometric network of 100 neurons for 500 ms with a depolarizing current and then observed the effects of modifying the refraction ratio. While leaving the connectivity and geometry (edge path lengths) unchanged, we tested three different signaling speeds (action potential conduction velocities): 2, 20, and 200 pixels per ms. We then observed whether the network was able to sustain inherent recurrent signaling in the absence of additional external stimulation. At the lowest signaling speed, 2 pixels per ms, we saw recurrent low-frequency periodic activity that qualitatively resembles a central pattern generator. When we increased the speed to 20 pixels per ms, there was still some recurrent activity, but it was more sporadic and irregular. At 200 pixels per ms, however, there was no signaling past the stimulus period. All the activity died away. This is the direct consequence of a mismatch in the refraction ratio: when signals arrive too quickly, the neurons have not had enough time to recover from their refractory periods, and the incoming signals do not have an opportunity to induce downstream activations. The activity in the entire network dies.
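The extinction mechanism described above can be seen in a toy example that is far simpler than the published simulation and is not a reproduction of it. The sketch assumes a single pulse circulating on a directed ring of identical nodes: each node fires when the pulse arrives unless it is still refractory, in which case the pulse is lost. The function `ring_activations`, the ring topology, and every parameter value (10 nodes, 10 pixel edges, a 4 ms refractory period) are hypothetical.

```python
def ring_activations(n_nodes: int, edge_length: float, speed: float,
                     refractory: float, t_max: float) -> int:
    """Count node firings as one pulse travels around a directed ring.

    A node fires when the pulse arrives, unless it fired less than
    `refractory` ms earlier; in that case the pulse dies and the count stops.
    """
    if speed <= 0:
        raise ValueError("speed must be positive")
    hop_latency = edge_length / speed   # time to cross one edge, in ms
    last_fired = [None] * n_nodes       # last firing time of each node
    count = 0
    step = 0
    while True:
        t = step * hop_latency          # arrival time at the current node
        if t > t_max:
            break                       # end of the observation window
        node = step % n_nodes
        previous = last_fired[node]
        if previous is not None and t - previous < refractory:
            break                       # node still refractory: activity dies
        last_fired[node] = t
        count += 1
        step += 1
    return count


if __name__ == "__main__":
    for speed in (2.0, 20.0, 200.0):    # pixels per ms
        fired = ring_activations(n_nodes=10, edge_length=10.0, speed=speed,
                                 refractory=4.0, t_max=500.0)
        print(f"speed={speed:>5} px/ms  firings={fired}")
```

With these assumed values, the pulse keeps circulating for the whole window at 2 and 20 pixels per ms, because a full lap takes longer than the refractory period. At 200 pixels per ms it returns to the first node after about 0.5 ms, finds it still refractory, and dies after a single lap. The toy does not capture the sporadic, irregular activity reported at the intermediate speed; it only isolates why signals that arrive too quickly fail to induce downstream activations.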

Using the same refraction ratio analysis, in a paper in Nature Scientific Reports we showed that efficient signaling in the axon arbors of inhibitory basket cell neurons depends on a trade-off between the time it takes action potentials to reach synaptic terminals (temporal cost) and the amount of cellular material associated with the wiring path length of the neuron’s morphology (material cost).

Dynamic signaling on branching axons is important for rapid and efficient communication between neurons, so the cell’s ability to modulate its function to achieve efficient signaling is presumably important for proper brain function. Our results suggested that the convoluted paths taken by axons reflect a design compensation by the neuron to lengthen action potential latencies in order to optimize the refraction ratio. The ratio is effectively a design principle that gives rise to the geometric shape (morphology) of the cells. Basket cells seem to have optimized their morphology to achieve an almost theoretical ideal of the refraction ratio.

In another paper, published in Frontiers in Systems Neuroscience, we again used the same ideas and analyzed the signaling dynamics of the connectome of the worm C. elegans to infer a purposeful connectivity design in how its nervous system has evolved to be wired. We simulated the dynamics of the C. elegans connectome using the competitive-refractory dynamics model, taking advantage of the known spatial (geometric) connectivity of the worm. We studied the dynamics that resulted from stimulating a chemosensory neuron (ASEL) in a known feeding circuit, both in isolation and embedded in the full connectome. We were able to show that contralateral motor neuron activations in ventral and dorsal classes of motor neurons emerged from the simulations in such a way that they were qualitatively similar to the rhythmic motor neuron firing patterns associated with locomotion. Part of our interpretation of the results was that there is an inherent, and we proposed purposeful, structural wiring to the C. elegans connectome that has evolved to serve specific behavioral functions.

There is a really important lesson in thinking about how the brain is physically constrained …

The main takeaway from all this work is that what seem like physical limitations the brain is subject to, such as its geometry, its (slow) finite signaling speeds, and the resulting signaling and information latencies, may not be limitations at all. In fact, one of the main issues with artificial neural networks is that they are highly under-constrained, and so it is not clear how one should go about optimizing them to maximize information processing and learning. The biological brain, it seems, because it is wet and squishy and made of ‘wetware’, has evolved to take advantage of such constraints in the algorithms it uses. Understanding the brain within the context of its constraints offers the opportunity to understand how the brain works, and what happens when it does not, as in neurological and cognitive disorders. It also offers new theoretical and computational ideas to pursue for the development of next-generation machine learning algorithms and artificial intelligence.